AWS Developer Tools Services Part 2: AWS CodeBuild

Introduction

This post is part of my mini-series about AWS Developer Tools Services:

- Part 1: AWS CodeCommit

- Part 2: AWS CodeBuild

- Part 3: AWS CodeDeploy

- Part 4: AWS CodePipeline


AWS CodeBuild

As the name implies, AWS CodeBuild is Amazon’s build service: it compiles your code, runs unit tests, and so on. Because build requirements vary from project to project and from technology to technology, it requires some customization.

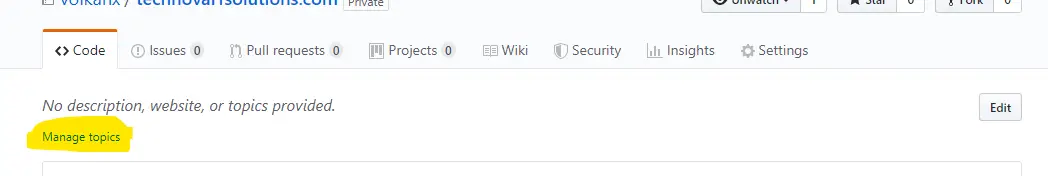

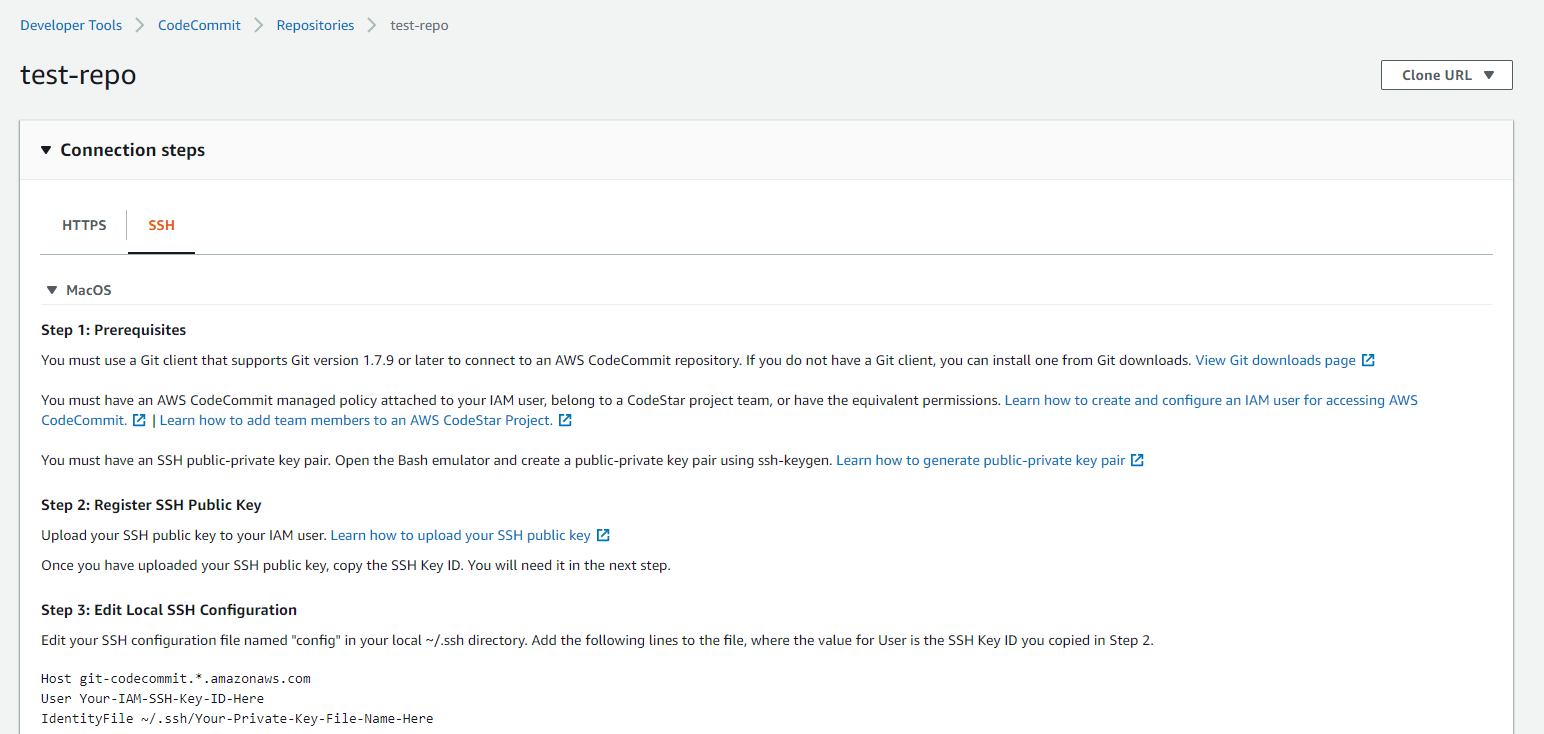

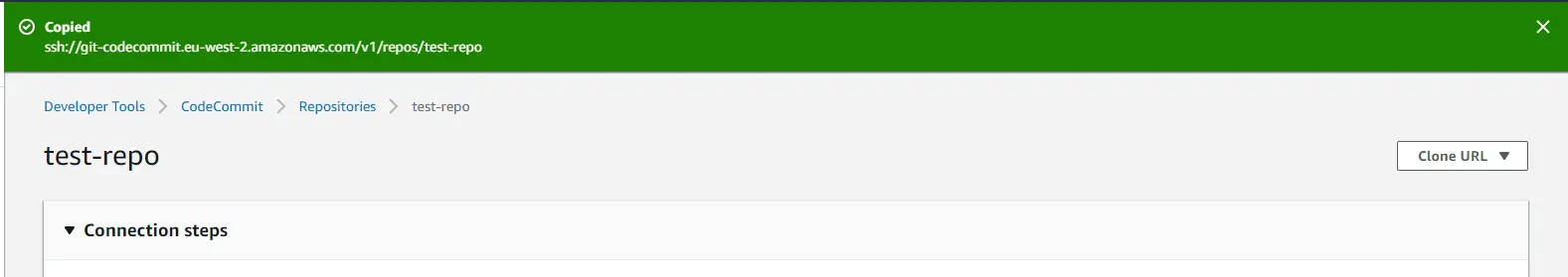

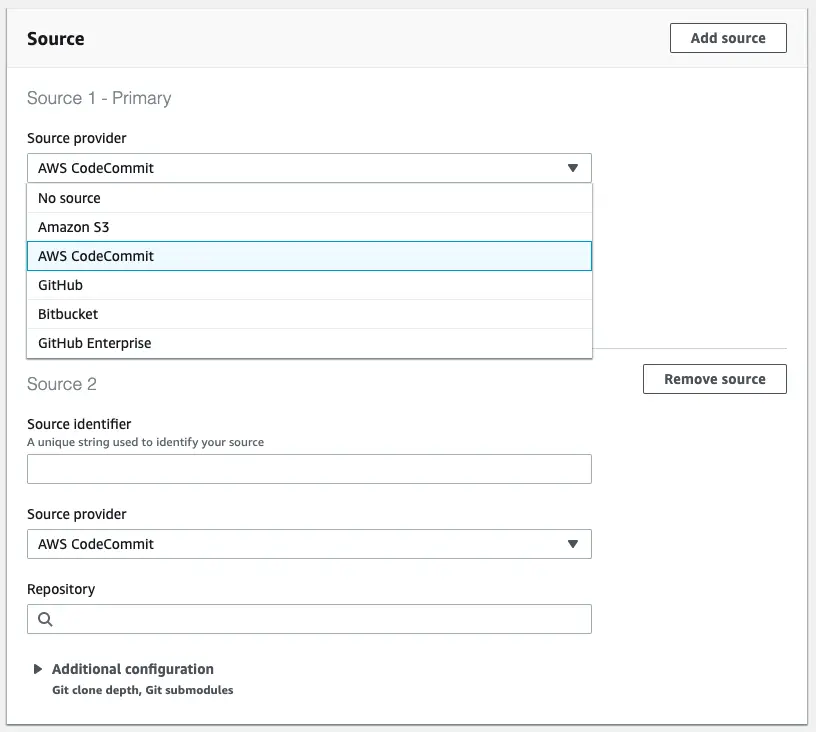

Hook up the source code

The first step is to select the source. CodeBuild is quite flexible here: it integrates with popular Git providers such as GitHub and Bitbucket. The easiest option is CodeCommit because of the direct integration between the services; no extra authentication is required to connect to the repository.

It also supports Amazon S3 as a source, but I cannot think of a practical use for it.

Another interesting feature is support for multiple input sources. If your build depends on source from multiple repositories, I would consider merging them into a single repository, but if that’s not possible, this is a nice option to make it work.
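
As a rough sketch of how a build might consume a second repository: CodeBuild exposes the checkout directory of each secondary source in an environment variable named CODEBUILD_SRC_DIR_<sourceIdentifier>, while the primary source stays in CODEBUILD_SRC_DIR. The identifier `shared_lib` and the target folder below are hypothetical, and the `$VAR` syntax assumes a Linux image; the shell on a Windows image would reference the variables differently.

```yaml
version: 0.2

phases:
  build:
    commands:
      # Primary source: the build starts in this directory.
      - echo "Primary source is in $CODEBUILD_SRC_DIR"
      # Secondary source: 'shared_lib' is a hypothetical source identifier
      # chosen when adding the second source to the build project.
      - echo "Secondary source is in $CODEBUILD_SRC_DIR_shared_lib"
      # Copy the secondary checkout next to the primary one (hypothetical layout).
      - cp -r "$CODEBUILD_SRC_DIR_shared_lib" ./shared_lib
```

Whether copying is the right move depends on how the projects reference each other; the point is only that both checkouts are reachable from the same build.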

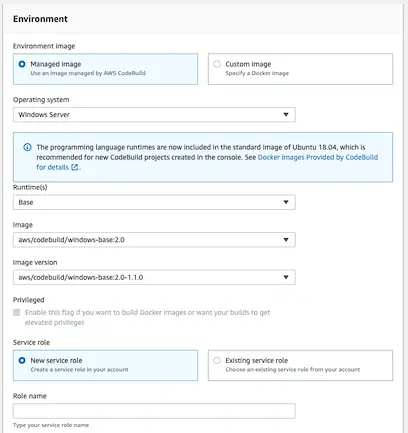

Hook up the Docker image with build tools

The build process takes place in a Docker container. By default, AWS provides its own build images for different operating systems.

A couple of notes on build images:

- AWS provides some build images, but we can provide our own images as well.

- How the managed images are configured is documented in Docker Images Provided by CodeBuild. These images have build tools installed for all the runtimes they support. As of this writing, though, dotnet core 3.0 is not supported in any of the images.

- Image availability may differ between regions. For example, the N. Virginia region has 3 supported OS images, whereas the London region doesn’t have a Windows image.

- Running the build process is not free; pricing details can be found on the AWS CodeBuild pricing page. If you frequently run long builds, these costs can quickly add up.

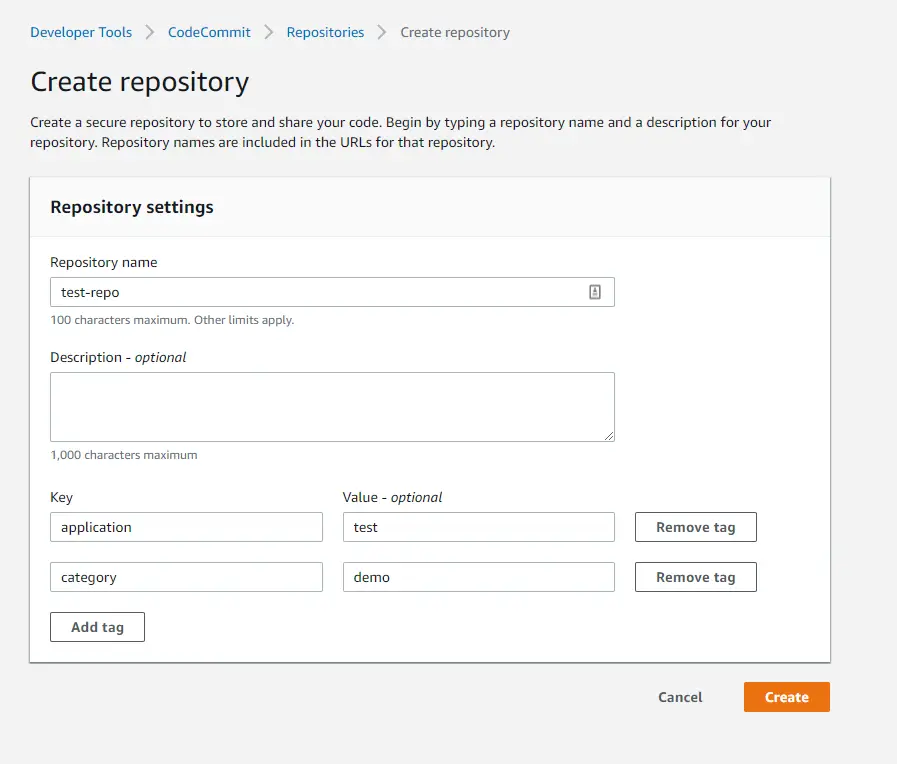

Configuring the build

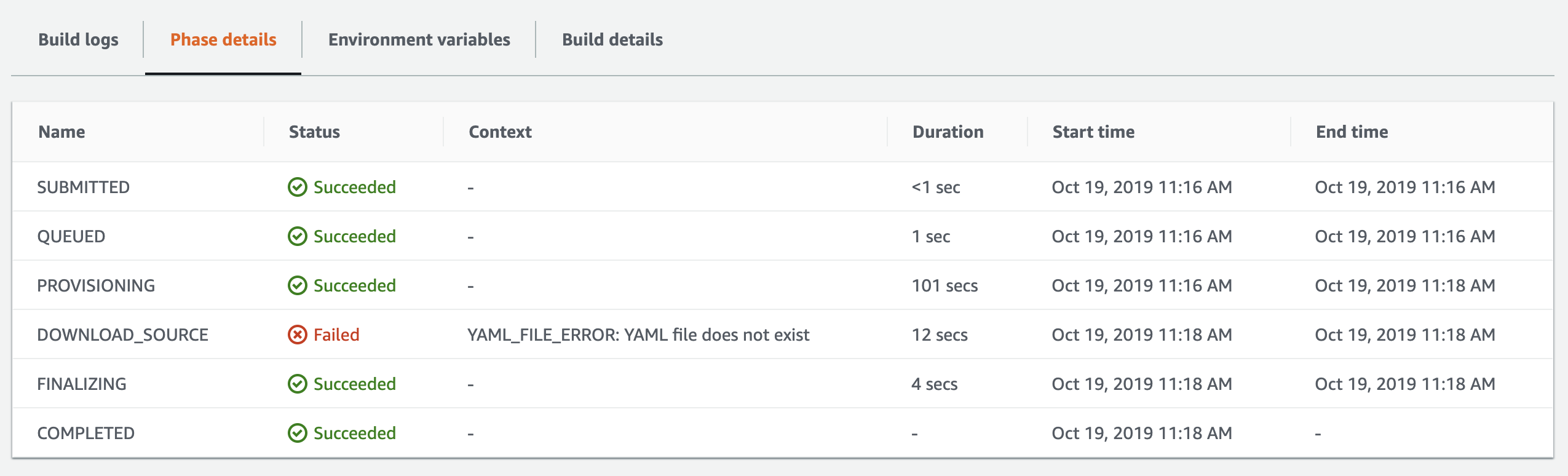

If you run the build after selecting the source and the build image, you get the following error:

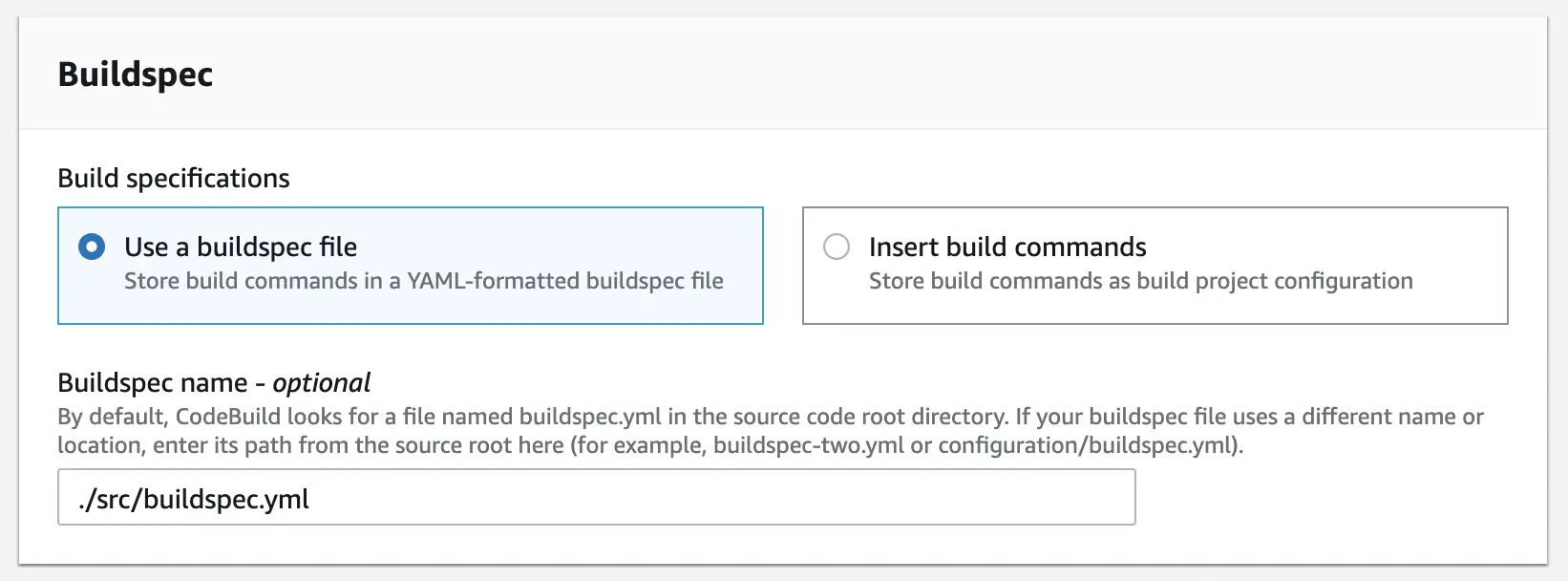

By default, CodeBuild requires a special configuration file called buildspec.yml to reside at the root of the repository.

In my example, the folder structure is like this:

    [Root]
        [Src]
            [Sample.UI.Web]
            [Sample.Core]
            [Sample.Core.UnitTests]
            Sample.sln
        buildspec.yml

I’m using a dotnet core 2.2 project on a Windows-based Docker image, and my buildspec.yml looks like this:

    version: 0.2

    phases:
      build:
        commands:
          - cd ./src
          - dotnet restore
          - dotnet test
          - dotnet publish Sample.UI.Web -c Release -o ../output

    artifacts:
      files:
        - '**/*'
      base-directory: ./src/output
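
The same steps could also be spread over CodeBuild’s named phases, which makes the log show more clearly where a build stopped. This is only a sketch of an alternative layout, not the configuration I ran; it assumes Sample.sln sits in the src folder and uses explicit paths instead of cd so it does not depend on the working directory being carried from one phase to the next.

```yaml
version: 0.2

phases:
  pre_build:
    commands:
      # Restore NuGet packages before the actual build starts.
      - dotnet restore ./src/Sample.sln
  build:
    commands:
      # A failing test returns a non-zero exit code and fails the build.
      - dotnet test ./src/Sample.sln
      # With dotnet core 2.2 a relative -o path is resolved against the
      # project folder, so this should end up in ./src/output.
      - dotnet publish ./src/Sample.UI.Web -c Release -o ../output

artifacts:
  files:
    - '**/*'
  base-directory: ./src/output
```

I kept the publish step in the build phase rather than post_build because, as far as I know, post_build still runs after a failed build phase.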

Running the unit tests

As you can see in my buildspec file, I’m running the unit tests using the dotnet test command. CodeBuild has no built-in facility to run unit tests because it’s a general-purpose tool; you have to provide your own mechanism to achieve this.

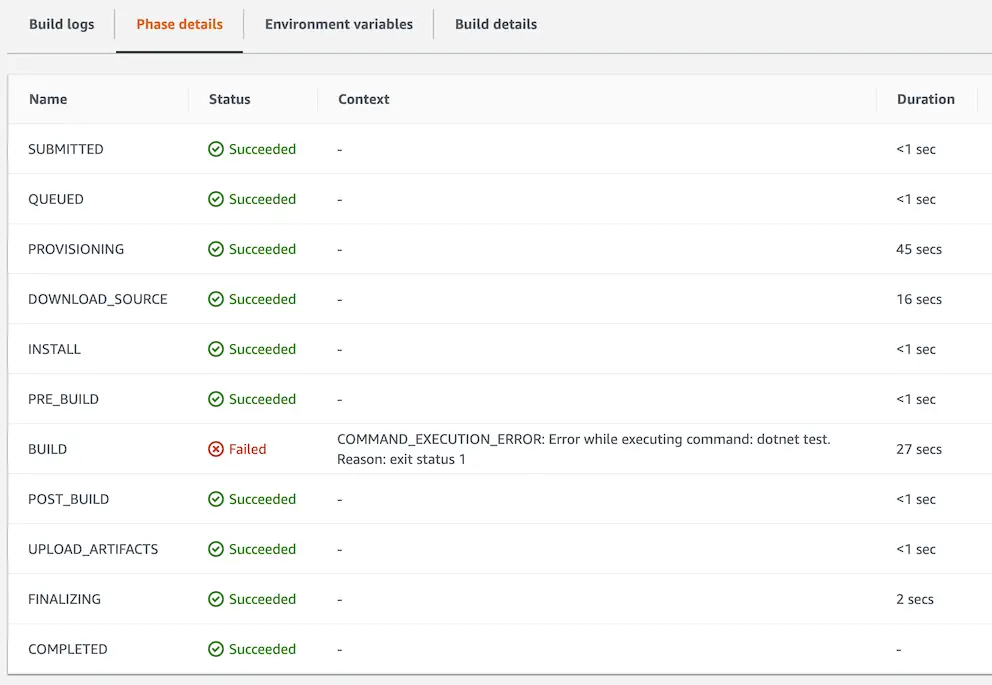

To check that the unit tests really run, I deliberately broke a test and checked in the code. After running the build again, I got this result:

So it actually runs the tests and fails the build if a test fails. Ideally, you want to run the tests whenever the code changes. It looks like this is currently possible via the CodePipeline service. I’ll explore that later and cover running the build and unit tests automatically.
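
A sketch of the fail-fast behaviour described above: CodeBuild stops a phase at the first command that returns a non-zero exit code, so keeping the test command ahead of the publish command prevents a broken build from producing output. Pointing dotnet test at the Sample.Core.UnitTests folder is my assumption about where the test project file lives.

```yaml
version: 0.2

phases:
  build:
    commands:
      - cd ./src
      - dotnet restore
      # Run only the unit test project; a failed test makes this command
      # exit with a non-zero code, which fails the build at this point.
      - dotnet test Sample.Core.UnitTests
      # Not reached when the tests fail.
      - dotnet publish Sample.UI.Web -c Release -o ../output
```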

Artifacts

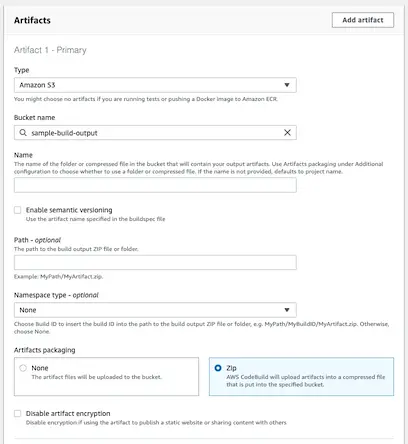

Now that we can build the project, we need to upload the build output somewhere so that we can deploy it. Currently, you can either upload the artifacts to Amazon S3 or produce no artifacts at all.

If you have already created a bucket, you can simply select it from the dropdown list.

The options are pretty basic. You can compress the output and upload it as a single zip file, or upload the artifacts you defined in the buildspec in their original format.

You can also create a folder named after the build ID (a GUID) so that the output of each build is kept separately. To do this, specify Build ID as the namespace type.
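
For reference, only the file selection lives in the buildspec; the bucket, the zip packaging, and the namespace type are settings of the build project itself. Here is a minimal sketch of the artifacts section with the optional discard-paths key spelled out (the value shown is, as far as I know, the default):

```yaml
artifacts:
  # Upload everything found under the base directory.
  files:
    - '**/*'
  # Paths of uploaded files are taken relative to this folder.
  base-directory: ./src/output
  # Keep the folder structure inside the artifact instead of flattening it.
  discard-paths: no
```

Setting discard-paths to yes would put all files at the top level of the artifact, which is unlikely to suit a published web application that relies on its folder layout.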

When you’re running the build, make sure to view the full logs to get the latest status quickly. I noticed that if you just watch the main page for the results, you may experience some delays.

Conclusion

In my experience, CodeBuild doesn’t do much to help with the build process itself because by nature it’s a generic tool. But it provides flexibility and full integration with other AWS services such as CodeCommit and CodeDeploy, so I believe it’s worth using.