C# 6.0 New Features - Auto-Properties with Initializers

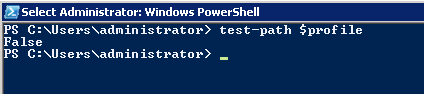

Currently, in Visual Studio 2013, if you have a line like this

public int MyProperty { get; }

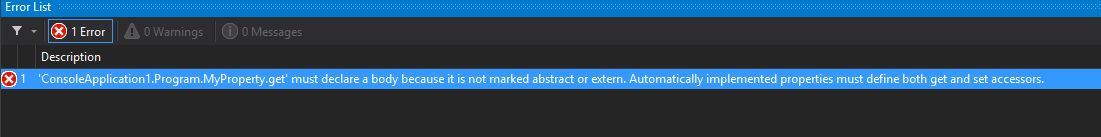

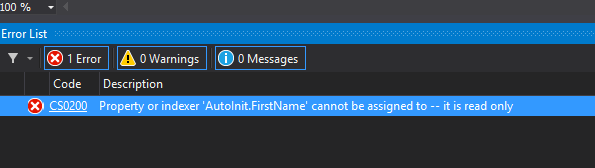

you’d get a compilation error like this:

But the same code compiles happily in VS 2015. The feature was added so that auto-properties no longer get in the way of immutable data types.
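To make the immutability point concrete, here is a minimal sketch of an immutable type built on getter-only auto-properties. The `Point` class, its members and the `WithX` helper are hypothetical names chosen for illustration; they are not part of the original example.

```csharp
using System;

// Hypothetical immutable type; Point, X, Y and WithX are illustrative names.
public sealed class Point
{
    // Getter-only auto-properties: they can be assigned only in an
    // initializer or in a constructor of this class.
    public int X { get; }
    public int Y { get; }

    public Point(int x, int y)
    {
        X = x;
        Y = y;
    }

    // "Changing" a value means creating a new instance.
    public Point WithX(int x)
    {
        return new Point(x, Y);
    }
}

public static class Program
{
    public static void Main()
    {
        var p = new Point(1, 2);
        var q = p.WithX(10);

        Console.WriteLine("{0},{1}", p.X, p.Y); // prints 1,2
        Console.WriteLine("{0},{1}", q.X, q.Y); // prints 10,2
    }
}
```

Once constructed, a `Point` cannot be modified from outside or from its own methods, which is the kind of type this feature is meant to make easier to write.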

Another new feature for auto-properties is initializers. For example, the following code compiles and runs with C# 6.0:

    using System;

    public class AutoInit
    {
        // Getter-only auto-properties with initializers
        public string FirstName { get; } = "Unknown";
        public string LastName { get; } = "Unknown";

        public AutoInit()
        {
            // The initializers have already run: prints "Unknown Unknown"
            Console.WriteLine(string.Format("{0} {1}", FirstName, LastName));

            FirstName = "Volkan";
            LastName = "Paksoy";

            // Prints "Volkan Paksoy"
            Console.WriteLine(string.Format("{0} {1}", FirstName, LastName));
        }
    }

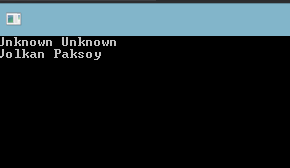

and the output unsurprisingly looks like this:

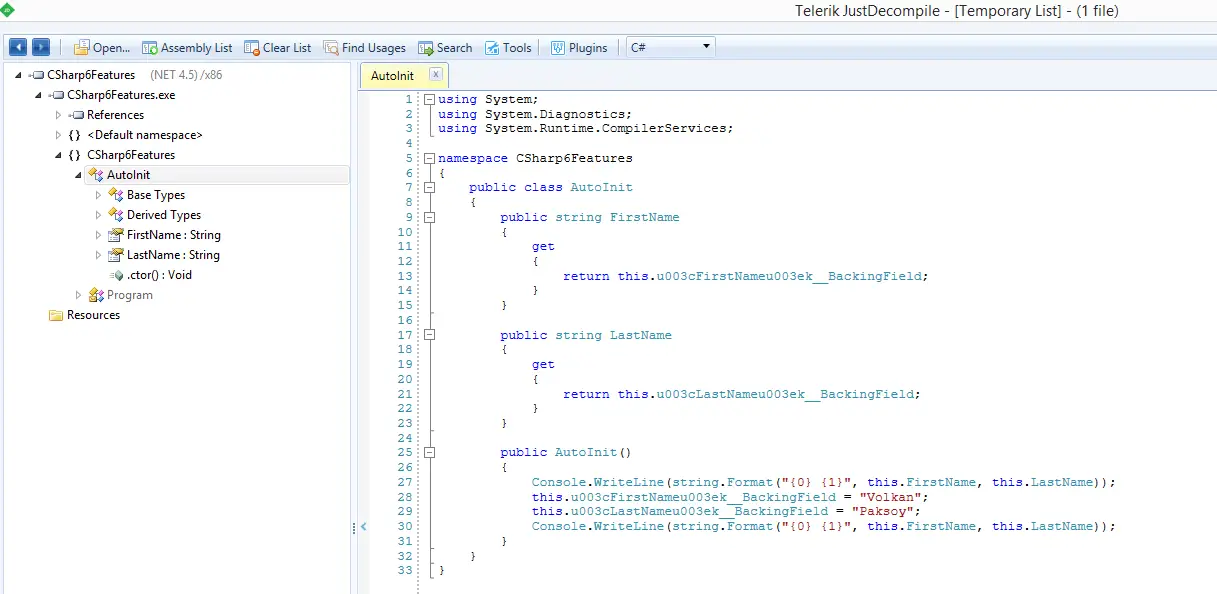

When I first ran this code successfully, I was surprised that I had managed to set values without a setter. It looks like under the covers the compiler generates a read-only backing field for the property and assigns the value directly to that field instead of calling a setter method. This can easily be seen using a decompiler.
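If a decompiler is not at hand, reflection gives a similar view. The sketch below repeats the `AutoInit` class without the console output and inspects it at runtime. It assumes the backing fields appear as private instance fields, which is how the current compiler emits them; their generated names are an implementation detail, so the code lists them rather than hard-coding one.

```csharp
using System;
using System.Reflection;

public class AutoInit
{
    public string FirstName { get; } = "Unknown";
    public string LastName { get; } = "Unknown";

    public AutoInit()
    {
        FirstName = "Volkan";
        LastName = "Paksoy";
    }
}

public static class Program
{
    public static void Main()
    {
        // The property has no setter at all, not even a private one.
        PropertyInfo prop = typeof(AutoInit).GetProperty("FirstName");
        Console.WriteLine(prop.CanWrite); // prints False

        // List the compiler-generated backing fields and whether each
        // one is marked readonly (IsInitOnly).
        FieldInfo[] fields = typeof(AutoInit).GetFields(
            BindingFlags.NonPublic | BindingFlags.Instance);
        foreach (FieldInfo field in fields)
        {
            Console.WriteLine("{0}: readonly = {1}", field.Name, field.IsInitOnly);
        }
    }
}
```

Each backing field should report `readonly = True`, which matches what the decompiler shows: the constructor writes to the field, and there is no setter to call.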

As the backing field is read-only, the property can only be assigned in its initializer or inside the constructor. So if you add the following method, the class won’t compile:

    public void SetValue()
    {
        FirstName = "another name";
    }

It’s a small improvement that provides an alternative way to write the same code in fewer lines.
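For comparison, here is a rough sketch of how the same class would have to be written by hand before C# 6.0 to get the same read-only behaviour. The class name `AutoInitOldStyle` and the field names `_firstName` and `_lastName` are made up for this illustration; the compiler's own generated field names differ.

```csharp
using System;

// Hand-written pre-C# 6.0 equivalent of AutoInit (illustrative names).
public class AutoInitOldStyle
{
    // Explicit readonly backing fields with their default values.
    private readonly string _firstName = "Unknown";
    private readonly string _lastName = "Unknown";

    // Explicit getter-only properties over those fields.
    public string FirstName { get { return _firstName; } }
    public string LastName { get { return _lastName; } }

    public AutoInitOldStyle()
    {
        // readonly fields may still be assigned inside the constructor.
        _firstName = "Volkan";
        _lastName = "Paksoy";
    }
}

public static class Program
{
    public static void Main()
    {
        var person = new AutoInitOldStyle();
        Console.WriteLine("{0} {1}", person.FirstName, person.LastName); // prints Volkan Paksoy
    }
}
```

The two fields and two property bodies here collapse into two one-line declarations in the C# 6.0 version.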