Backing up GitHub Account with PowerShell

With lots of projects and assets stored on GitHub, I thought it would be a good idea to create periodic backups of my entire GitHub account (all repositories, both public and private). The beauty of it is that, since Git is open source, I can migrate my account anywhere, even to my own server on AWS.

Challenges

With the above goal in mind, I started to outline what’s necessary to achieve this task:

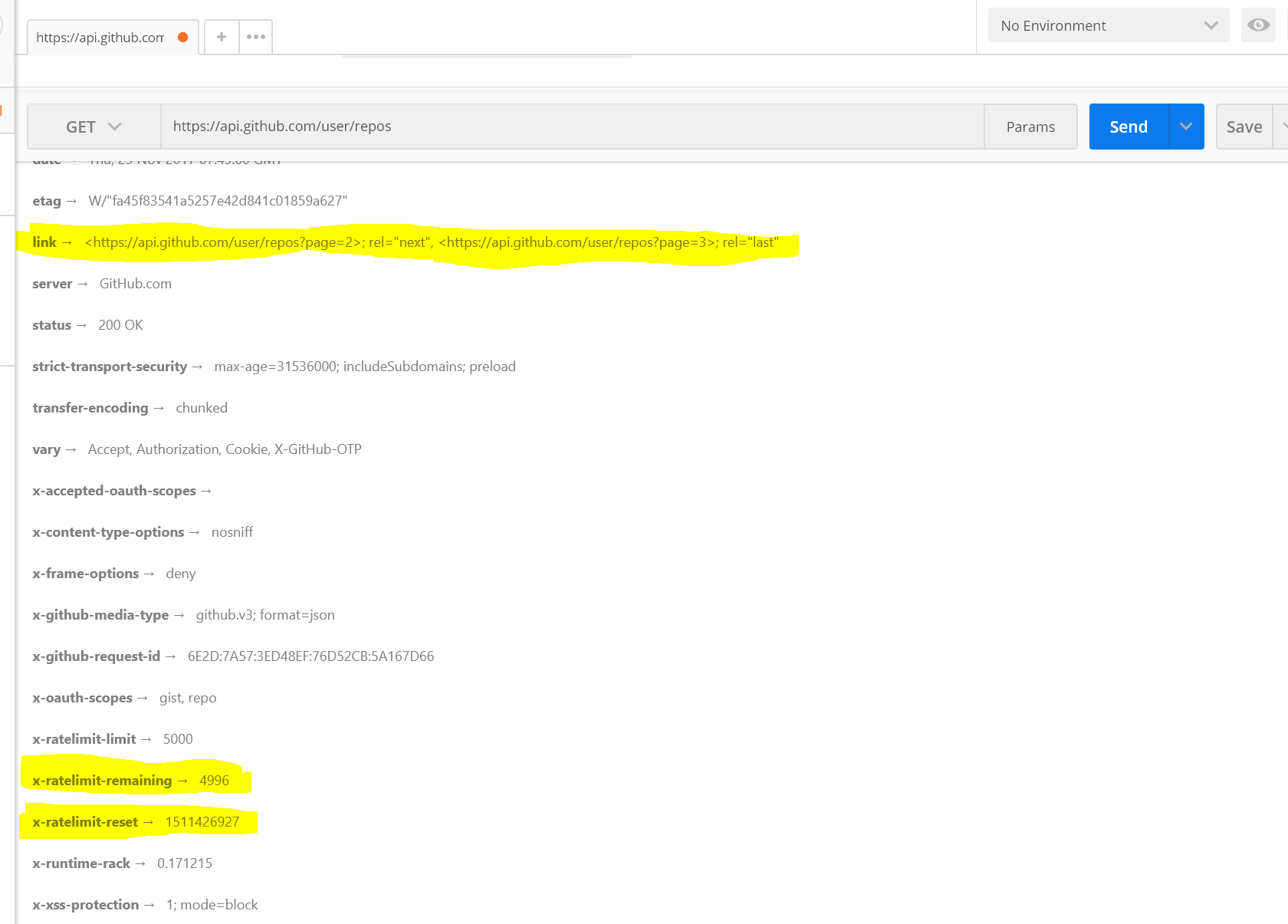

- Automate calling the GitHub API to get all repos, including private ones. (One should be aware of the GitHub API rate limit, which is currently 5,000 requests per hour for authenticated requests. If your scripts use up the whole allowance, you may not be able to use the API yourself. The good thing is that every response reports how many calls are left before you exceed your quota in the x-ratelimit-remaining HTTP header.)

- Pull the latest versions of all branches. Overwrite local versions in case of conflict.

- Find a way to easily transfer a Git repository (as a single compressed file rather than individual files) if it needs to be moved to another medium (such as an S3 bucket).
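To keep an eye on the remaining quota mentioned in the first point, the x-ratelimit-remaining value can be read from the headers of any response. The following is a minimal sketch, assuming the headers arrive as a dictionary-like object (for example the Headers property of an Invoke-WebRequest response). Get-RateLimitRemaining is a hypothetical helper name, not part of any library, and how header-name casing is matched may differ between dictionary types.

```powershell
# Hypothetical helper: extracts the remaining request quota from response headers.
# Assumes $Headers is a hashtable or dictionary keyed by header name.
function Get-RateLimitRemaining {
    param(
        [Parameter(Mandatory)]
        $Headers
    )

    # Depending on the PowerShell version, a header value may be a string or a
    # collection of strings, so wrap it in an array and take the first element.
    $value = @($Headers['X-RateLimit-Remaining'])[0]
    if ([string]::IsNullOrEmpty($value)) {
        return $null   # header not present
    }
    return [int]$value
}

# Example with a hand-built header table standing in for a real response
$sample = @{ 'X-RateLimit-Remaining' = '4987' }
$remaining = Get-RateLimitRemaining -Headers $sample
if ($null -ne $remaining -and $remaining -lt 100) {
    Write-Warning "Only $remaining GitHub API calls left this hour."
}
```

The threshold of 100 is arbitrary; a scheduled backup could simply stop early when the value drops below whatever margin you want to keep for your own use.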

With these challenges ahead, I first started looking into getting the repos from GitHub:

Consuming GitHub API via PowerShell

First, I shopped around for existing libraries for this task, such as PowerShellForGitHub by Microsoft, but it didn’t work for me. I couldn’t even get the samples on their wiki to run: it kept giving a “cmdlet not found” error, so I gave up.

I found a nice video on Channel 9 about consuming REST APIs via PowerShell, which uses the GitHub API as a case study. It was perfect for me, as my goal was to use the GitHub API anyway. And since this is a generic approach to consuming APIs, it can come in handy in the future as well. It’s quite easy using basic authentication.

Authorization

The first step is to create a Personal Access Token with the repo scope. (Make sure to copy the value before you close the page; there is no way to retrieve it afterwards.)

Once I had the access token, I had to generate the authorization header as shown in the Channel 9 video:

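    # Basic authentication: "<account name>:<token>" encoded as Base64
    $token = '<YOUR GITHUB ACCOUNT NAME>:<PERSONAL ACCESS TOKEN>'
    $base64Token = [System.Convert]::ToBase64String([System.Text.Encoding]::UTF8.GetBytes($token))
    $headers = @{
        Authorization = 'Basic {0}' -f $base64Token
    }
    # Returns the first page of repositories visible to the token, private ones included
    $response = Invoke-RestMethod -Headers $headers -Uri 'https://api.github.com/user/repos'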

This way I was able to get the repositories, including the private ones, but by default the API returns 30 records per page, so I had to traverse the pages.

Handling pagination

GitHub sends the next and the last page URLs in the Link header:

<https://api.github.com/user/repos?page=2>; rel="next", <https://api.github.com/user/repos?page=3>; rel="last"

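The challenge here is that the Invoke-RestMethod response doesn’t seem to give access to the headers, which is a huge bummer, as there is useful information in the headers, as the screenshot shows. (PowerShell 6.0 and later add a -ResponseHeadersVariable parameter to Invoke-RestMethod for this purpose.)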

At this point, I wanted to use PSGitHub, mentioned in the video, but as of this writing it doesn’t support getting all repositories. In fact, a note in it says “We need to figure out how to handle data pagination”, which made me think we are on the same page here (no pun intended!).
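When the headers are available (Invoke-WebRequest, for instance, returns a response object with a Headers property), the next-page URL can be pulled out of a Link value like the one shown earlier. This is a minimal sketch rather than a full Link header parser: Get-NextPageUrl is a hypothetical helper name, and it assumes the `<url>; rel="next"` layout of the sample header.

```powershell
# Hypothetical helper: returns the URL marked rel="next" in a Link header value,
# or $null when there is no next page.
function Get-NextPageUrl {
    param(
        [string]$LinkHeader
    )

    # Each entry looks like: <url>; rel="name"
    $match = [regex]::Match($LinkHeader, '<([^>]+)>\s*;\s*rel="next"')
    if ($match.Success) {
        return $match.Groups[1].Value
    }
    return $null
}

# The sample header from above
$link = '<https://api.github.com/user/repos?page=2>; rel="next", <https://api.github.com/user/repos?page=3>; rel="last"'
$next = Get-NextPageUrl -LinkHeader $link
Write-Host $next
```

On the last page there is no rel="next" entry, so the function returns $null, which makes a natural loop exit condition.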

GitHub supports a page size parameter (e.g. per_page=50), but the documentation says the maximum value is 100. Although it is tempting to use that, as it would bring in all my repos and leave some room for future ones as well, I wanted to go with a more permanent solution. So I decided to keep requesting pages as long as objects are returned, like this:

    $page = 1
    do {
        # Ask for one page at a time; an empty page means there is nothing left
        $response = Invoke-RestMethod -Headers $headers -Uri "https://api.github.com/user/repos?page=$page"
        foreach ($obj in $response) {
            Write-Host $obj.id
        }
        $page++
    } while ($response.Count -gt 0)

Of course, in the foreach loop I have to do something with the repo information instead of just printing the id.
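The same loop can be wrapped in a small reusable function that collects every page into one array. This is only a sketch: Get-AllPages and its parameters are hypothetical names, the page fetcher is passed in as a script block so the loop itself does not depend on GitHub, and MaxPages is an arbitrary safety cap against endless loops.

```powershell
# Hypothetical helper: calls $FetchPage with page numbers 1, 2, 3, ... and
# gathers the results until a page comes back empty (or MaxPages is reached).
function Get-AllPages {
    param(
        [Parameter(Mandatory)]
        [scriptblock]$FetchPage,
        [int]$MaxPages = 100
    )

    $items = New-Object System.Collections.Generic.List[object]
    for ($page = 1; $page -le $MaxPages; $page++) {
        # ForEach-Object flattens the result in case a page arrives as a
        # single array object instead of individual items.
        $batch = @(& $FetchPage $page | ForEach-Object { $_ })
        if ($batch.Count -eq 0) {
            break
        }
        $items.AddRange($batch)
    }
    return $items.ToArray()
}

# Stand-in fetcher: pretends the API has two pages of three items each
$fakeFetch = {
    param($page)
    if ($page -le 2) {
        1..3 | ForEach-Object { "repo-$page-$_" }
    }
}
$all = @(Get-AllPages -FetchPage $fakeFetch)
Write-Host "Collected $($all.Count) items"
```

With the real API, the script block would call Invoke-RestMethod with the headers built earlier and a URL such as "https://api.github.com/user/repos?per_page=100&page=$page", which also cuts the number of requests by using the maximum page size.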

Cloning / pulling repositories

At this point I was able to list all my repositories. The GitHub API only gives me information about the repositories, so now I needed to be able to run actual git commands to get my code.

First I installed PowerShell on my Mac, which is quite simple, as specified in the documentation:

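    # Homebrew syntax at the time of writing; newer Homebrew releases use: brew install --cask powershell
    brew tap caskroom/cask
    brew cask install powershell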

With Git already installed on my machine, all that was left was to use Git commands in the PowerShell terminal to clone or update a repo, such as:

    git fetch --all
    git reset --hard origin/master

Since this is just going to be a backup copy, I don’t want to deal with merge conflicts, so I just overwrite everything local.

Another approach could be deleting the old repo and cloning it from scratch, but I think it would be a bit wasteful to do that every time for each and every repository.
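The clone-on-first-run, update-afterwards idea can be sketched as follows. Get-SyncAction and Sync-Repository are hypothetical helper names. The sketch assumes git is on the PATH, that credentials for private repositories are already configured (for example through a credential helper), and that the local copy has the given branch checked out. Like the commands above, it only resets that one branch.

```powershell
# Hypothetical helper: decides whether a local copy already exists.
function Get-SyncAction {
    param(
        [Parameter(Mandatory)][string]$LocalPath
    )
    if (Test-Path -Path (Join-Path $LocalPath '.git')) { 'update' } else { 'clone' }
}

# Hypothetical helper: clones the repository on the first run; on later runs
# fetches everything and hard-resets the working copy to the remote branch.
function Sync-Repository {
    param(
        [Parameter(Mandatory)][string]$CloneUrl,
        [Parameter(Mandatory)][string]$LocalPath,
        [string]$Branch = 'master'
    )

    if ((Get-SyncAction -LocalPath $LocalPath) -eq 'update') {
        git -C $LocalPath fetch --all
        git -C $LocalPath reset --hard "origin/$Branch"
    }
    else {
        git clone $CloneUrl $LocalPath
    }
}
```

Inside the foreach loop from the pagination section this could be called as Sync-Repository -CloneUrl $obj.clone_url -LocalPath (Join-Path $backupRoot $obj.name) -Branch $obj.default_branch, where $backupRoot is an assumed backup folder and clone_url, name and default_branch are fields of the repository objects returned by the API.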

Putting it all together

Now that I have all the bits and pieces, I have to glue them together into a meaningful script that can be scheduled, and here it is:

Conclusion and Future Improvements

This version accomplishes the basic task of backing up an entire GitHub account, but it can be improved in a few ways. Maybe I can post a follow-up article including those improvements. A few ideas that come to mind are:

- Get Gists (private and public) as well.

- Add an option to exclude repos by name or by type (e.g. get only private ones, or get all except repo123).

- Add an option to export them to a “non-git” medium such as an S3 bucket using git bundle (which turns out to be a great tool for packing everything in a repository into a single file).

- Create a Docker image that contains all the necessary software (Git, PowerShell, the backup script, etc.) so that it can be distributed without any setup requirements.
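For the git bundle idea, a rough sketch of what the export step might look like is shown below. Get-BundlePath and Export-RepositoryBundle are hypothetical helper names and the dated file name is just one possible convention. The sketch assumes git is on the PATH and that OutputDirectory is an absolute path, since git is run from inside the repository folder.

```powershell
# Hypothetical helper: builds a dated bundle file name for a repository folder.
function Get-BundlePath {
    param(
        [Parameter(Mandatory)][string]$RepoPath,
        [Parameter(Mandatory)][string]$OutputDirectory,
        [datetime]$Date = (Get-Date)
    )
    $name = Split-Path -Path $RepoPath -Leaf
    Join-Path $OutputDirectory ('{0}-{1:yyyyMMdd}.bundle' -f $name, $Date)
}

# Hypothetical helper: packs all refs of a local repository into a single file
# and asks git to verify the result.
function Export-RepositoryBundle {
    param(
        [Parameter(Mandatory)][string]$RepoPath,
        [Parameter(Mandatory)][string]$OutputDirectory
    )
    $bundle = Get-BundlePath -RepoPath $RepoPath -OutputDirectory $OutputDirectory
    # Out-Host keeps git's console output out of the function's return value
    git -C $RepoPath bundle create $bundle --all | Out-Host
    git -C $RepoPath bundle verify $bundle | Out-Host
    $bundle
}
```

The resulting file can be copied anywhere as an ordinary file and later restored with git clone, passing the bundle path in place of a repository URL.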